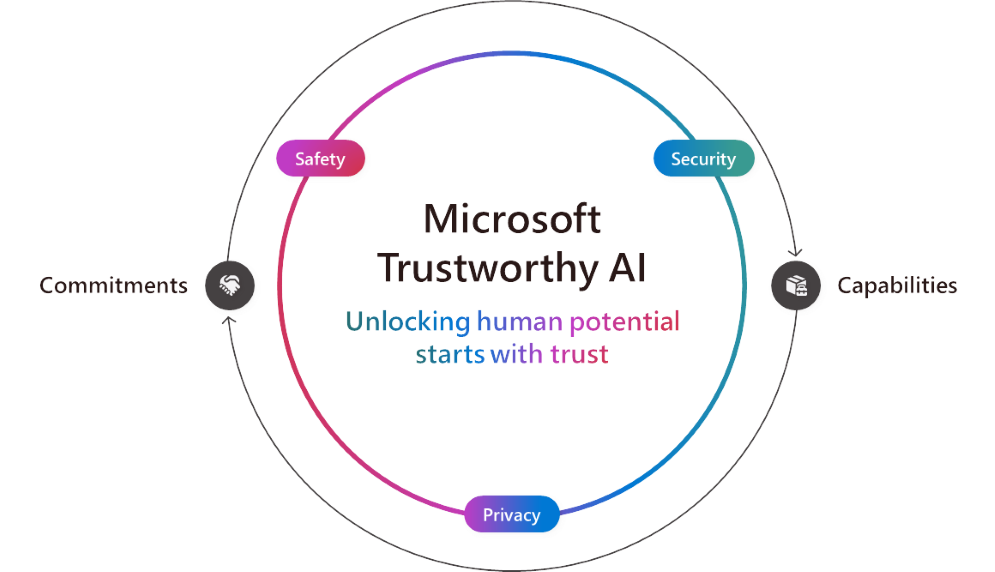

As AI advances, we all have a role to play in unlocking AI’s positive impact for organizations and communities around the world. That’s why we’re focused on helping customers use and build AI that is trustworthy, meaning AI that is secure, safe and private.

At Microsoft, we have made commitments to ensure Trustworthy AI and are building industry-leading technology to support them. Our commitments and capabilities go hand in hand to make sure our customers and developers are protected at every layer.

Building on our commitments, today we are announcing new product capabilities to strengthen the security, safety and privacy of AI systems.

Security. Security is our top priority at Microsoft, and our expanded Secure Future Initiative (SFI) underscores the company-wide commitments and the responsibility we feel to make our customers more secure. This week we announced our first SFI Progress Report, highlighting updates spanning culture, governance, technology and operations. This work delivers on our pledge to prioritize security above all else and is guided by three principles: secure by design, secure by default and secure operations. In addition to our first-party offerings, Microsoft Defender and Purview, our AI services come with foundational security controls, such as built-in functions to help prevent prompt injections and copyright violations. Building on those, today we’re announcing two new capabilities:

- Evaluations in Azure AI Studio to support proactive risk assessments.

- Transparency into web queries in Microsoft 365 Copilot, coming soon, to help admins and users better understand how web search enhances the Copilot response.

Our security capabilities are already being used by customers. Cummins, a 105-year-old company known for its engine manufacturing and development of clean energy technologies, turned to Microsoft Purview to strengthen its data security and governance by automating the classification, tagging and labeling of data. EPAM Systems, a software engineering and business consulting company, deployed Microsoft 365 Copilot for 300 users because of the data protection it gets from Microsoft. J.T. Sodano, Senior Director of IT, shared that “we were a lot more confident with Copilot for Microsoft 365, compared to other large language models (LLMs), because we know that the same information and data protection policies that we’ve configured in Microsoft Purview apply to Copilot.”

Safety. Microsoft’s broader Responsible AI principles, established in 2018 and inclusive of both security and privacy, continue to guide how we build and deploy AI safely across the company. In practice this means properly building, testing and monitoring systems to avoid undesirable behaviors, such as harmful content, bias, misuse and other unintended risks. Over the years, we have made significant investments in building out the governance structure, policies, tools and processes needed to uphold these principles. We are also committed to sharing what we learn on this journey with our customers. We use our own best practices and learnings to provide people and organizations with capabilities and tools to build AI applications that meet the same high standards we strive for.

Today, we are sharing new capabilities to help customers pursue the benefits of AI while mitigating the risks:

- A Correction capability in Microsoft Azure AI Content Safety’s Groundedness detection feature that helps fix hallucination issues in real time before users see them.

- Embedded Content Safety, which allows customers to embed Azure AI Content Safety on devices. This is important for on-device scenarios where cloud connectivity might be intermittent or unavailable.

- New evaluations in Azure AI Studio to help customers assess the quality and relevancy of outputs and how often their AI application outputs protected material.

- Protected Material Detection for Code is now in preview in Azure AI Content Safety to help detect pre-existing content and code. This feature helps developers explore public source code in GitHub repositories, fostering collaboration and transparency, while enabling more informed coding decisions.
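To make the idea behind the Correction capability in the list above more concrete, here is a minimal, purely illustrative sketch of "detect ungrounded text, then correct it before the user sees it." It does not call Azure AI Content Safety and is not how the service works internally: the word-overlap heuristic, the `threshold` value and the function names (`is_grounded`, `correct`) are all assumptions made for this example. It simply drops sentences that the grounding source does not support.

```python
import re


def _tokens(text: str) -> set[str]:
    """Lowercase word tokens; a crude stand-in for real semantic matching."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def split_sentences(text: str) -> list[str]:
    """Split on whitespace that follows sentence-ending punctuation."""
    return [s.strip() for s in re.split(r"(?<=[.!?])\s+", text) if s.strip()]


def is_grounded(sentence: str, source: str, threshold: float = 0.6) -> bool:
    """Hypothetical heuristic: share of the sentence's words found in the source."""
    words = _tokens(sentence)
    if not words:
        return True
    return len(words & _tokens(source)) / len(words) >= threshold


def correct(draft: str, source: str) -> tuple[str, list[str]]:
    """Return the draft without ungrounded sentences, plus the removed sentences."""
    kept, removed = [], []
    for sentence in split_sentences(draft):
        (kept if is_grounded(sentence, source) else removed).append(sentence)
    return " ".join(kept), removed


# Toy grounding source and a model draft containing one unsupported claim.
source = "The warranty covers parts and labor for two years from the purchase date."
draft = (
    "The warranty covers parts and labor for two years. "
    "It also includes free worldwide shipping."
)
corrected, removed = correct(draft, source)
print(corrected)
print(removed)
```

In this toy run the shipping claim is removed because none of its words appear in the source. A production system would rely on a far more capable model for both detection and rewriting than this word-overlap check.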

It’s amazing to see how customers across industries are already using Microsoft solutions to build more secure and trustworthy AI applications. For example, Unity, a platform for 3D games, used Microsoft Azure OpenAI Service to build Muse Chat, an AI assistant that makes game development easier. Muse Chat uses content-filtering models in Azure AI Content Safety to ensure responsible use of the software. Additionally, ASOS, a UK-based fashion retailer with nearly 900 brand partners, used the same built-in content filters in Azure AI Content Safety to support top-quality interactions through an AI app that helps customers find new looks.

We’re seeing the impact in the education space too. New York City Public Schools partnered with Microsoft to develop a chat system that is safe and appropriate for the education context, which they are now piloting in schools. The South Australia Department for Education similarly brought generative AI into the classroom with EdChat, relying on the same infrastructure to ensure safe use for students and teachers.

Privacy. Data is at the foundation of AI, and Microsoft’s priority is to help ensure customer data is protected and compliant through our long-standing privacy principles, which include user control, transparency and legal and regulatory protections. To build on this, today we’re announcing:

- Confidential inferencing in preview in our Azure OpenAI Service Whisper model, so customers can develop generative AI applications that support verifiable end-to-end privacy. Confidential inferencing ensures that sensitive customer data remains secure and private during the inferencing process, which is when a trained AI model makes predictions or decisions based on new data. This is especially important for highly regulated industries, such as health care, financial services, retail, manufacturing and energy.

- The general availability of Azure Confidential VMs with NVIDIA H100 Tensor Core GPUs, which allow customers to secure data directly on the GPU. This builds on our confidential computing solutions, which ensure customer data stays encrypted and protected in a secure environment so that no one gains access to the information or system without permission.

- Azure OpenAI Data Zones for the EU and U.S., coming soon, which build on the existing data residency provided by Azure OpenAI Service by making it easier to manage the data processing and storage of generative AI applications. This new functionality offers customers the flexibility of scaling generative AI applications across all Azure regions within a geography, while giving them control over data processing and storage within the EU or U.S.
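The Data Zones idea in the list above (scale across regions, but never leave the chosen geography) can be pictured with a small routing sketch. This is only an illustration under stated assumptions: the `DATA_ZONES` mapping, the regions listed in it, the load figures and the `pick_region` helper are hypothetical and do not reflect the actual Azure OpenAI configuration or API.

```python
# Hypothetical zone-to-region mapping; the real membership of each
# Data Zone is defined by Azure, not by this example.
DATA_ZONES = {
    "EU": ["westeurope", "francecentral", "swedencentral"],
    "US": ["eastus", "westus", "southcentralus"],
}


def pick_region(zone: str, load: dict[str, float]) -> str:
    """Choose the least-loaded region, considering only regions inside the zone.

    Regions with no reported load are treated as fully loaded (1.0).
    """
    candidates = DATA_ZONES[zone]
    return min(candidates, key=lambda region: load.get(region, 1.0))


# Made-up utilization numbers between 0.0 (idle) and 1.0 (saturated).
load = {
    "westeurope": 0.9,
    "francecentral": 0.4,
    "swedencentral": 0.7,
    "eastus": 0.1,
}

print(pick_region("EU", load))
print(pick_region("US", load))
```

Even though `eastus` has the lowest load overall in this made-up data, a request pinned to the EU zone is only ever routed to an EU region, which is the essence of the data-boundary guarantee described above.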

We’ve seen increasing customer interest in confidential computing and excitement for confidential GPUs, including from application security provider F5, which is using Azure Confidential VMs with NVIDIA H100 Tensor Core GPUs to build advanced AI-powered security solutions while ensuring the confidentiality of the data its models are analyzing. Multinational banking corporation Royal Bank of Canada (RBC) has also integrated Azure confidential computing into its own platform to analyze encrypted data while preserving customer privacy. With the general availability of Azure Confidential VMs with NVIDIA H100 Tensor Core GPUs, RBC can now use these advanced AI tools to work more efficiently and develop more powerful AI models.

Achieve more with Trustworthy AI

We all need and expect AI we can trust. We’ve seen what’s possible when people are empowered to use AI in a trusted way, from enriching employee experiences and reshaping business processes to reinventing customer engagement and reimagining our everyday lives. With new capabilities that improve security, safety and privacy, we continue to enable customers to use and build trustworthy AI solutions that help every person and organization on the planet achieve more. Ultimately, Trustworthy AI encompasses all that we do at Microsoft and it’s essential to our mission as we work to expand opportunity, earn trust, protect fundamental rights and advance sustainability across everything we do.

Related:

Commitments

- Security: Secure Future Initiative

- Privacy: Trust Center

- Safety: Responsible AI Principles

Capabilities

- Security: Security for AI

- Privacy: Azure Confidential Computing

- Safety: Azure AI Content Safety

Author: Takeshi Numoto
